Overview of a musical composition environment

by Stéphane Rollandin

This page presents my personal set-up for musical composition.

Introduction

I am a musically illiterate computer music student: I cannot read music, play any instrument, or sing. Most music composition software assumes a basic knowledge of music that I do not have, so I have been driven to program my own composition tools. They are presented on this page.

The purpose of this page is to share my ideas and my actual software, all of it open-source, with like-minded people. It comes as code written in Lisp, in Smalltalk, or in the KeyKit language.

Reproducing part or all of this composition environment requires many different open-source programs. My own code simply builds on top of them, since there is no need to reinvent what other people have already done, most often amazingly well.

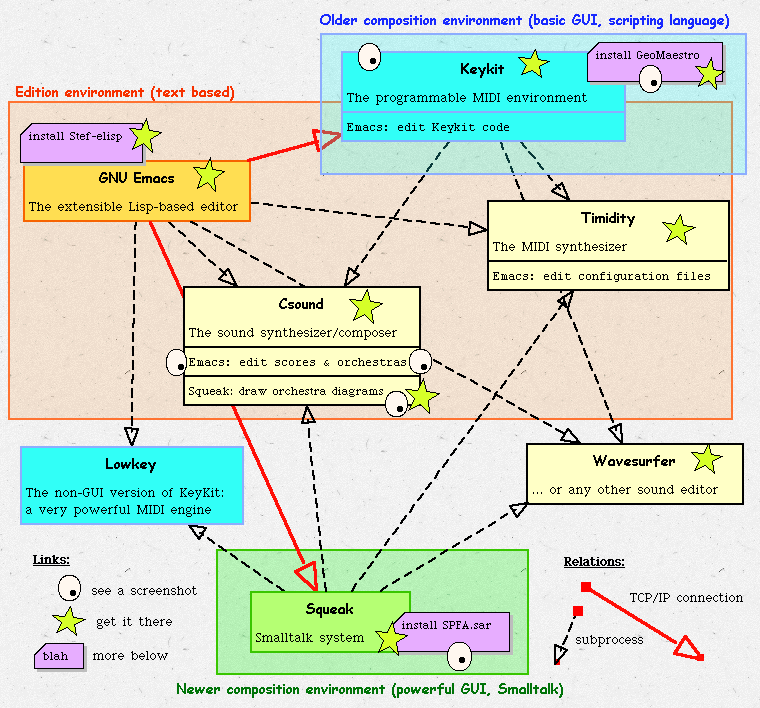

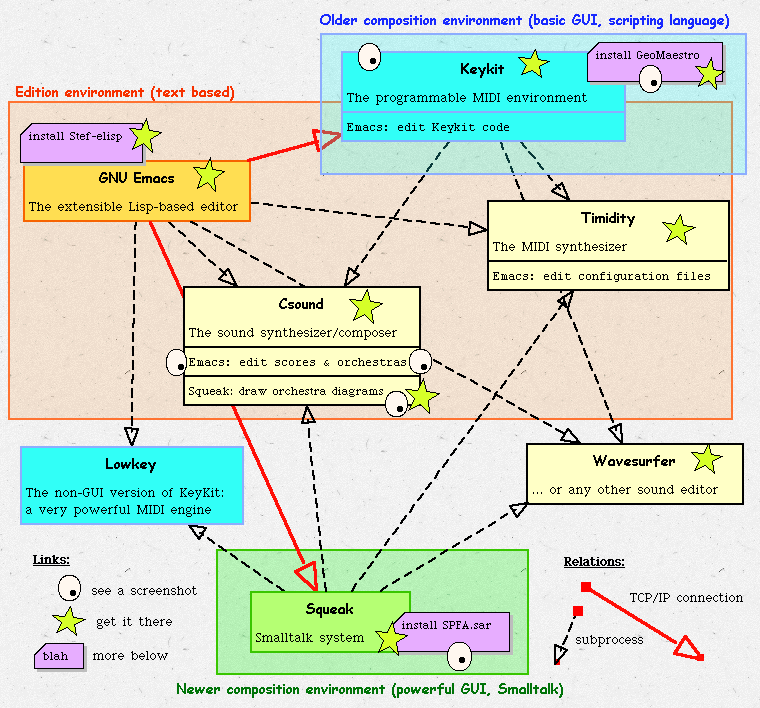

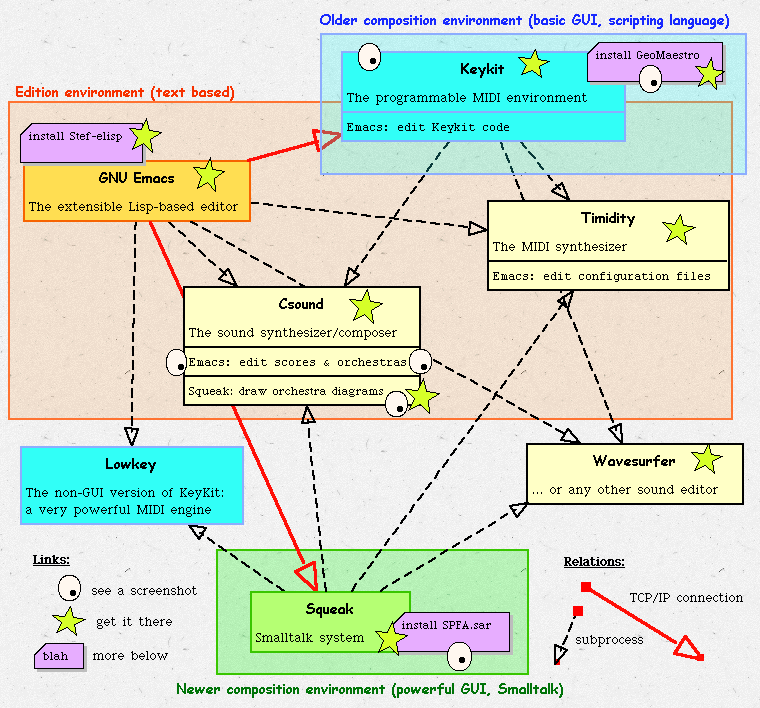

Here is an overview map of my current system, with active links to screenshots and download pages:


Why open-source, multi-platform, high-level languages?

Open source is a requirement for me. First, I have no money to spend on music software. Second, I want total control over my tools. Third, open-source code is very often easily portable across platforms: my OS is currently Windows XP, but I know that sooner or later Linux will replace it, and I want my tools to be ready for that change.

High-level interpreted languages (Lisp, Smalltalk, KeyKit) have my preference since they free the user/composer from the compilation step, making the code modifiable on the fly and thus keeping the whole environment alive. They also make complex musical ideas much easier to implement than, say, C. Finally, they are more portable than low-level code.

KeyKit/GeoMaestro

This was my first composition environment. On its own, KeyKit is a major tool for MIDI composition: it makes it easy to manipulate musical phrases and to program algorithmic composition procedures, and it comes with many toys allowing real-time interaction with automatically generated material.

Real-time interaction was not what I was into, though, so I developed a non-real-time composition method based on a specific two-dimensional representation of notes, in which rhythm is described as a set of geometrical objects and their variations, and the interaction between these objects and the static 2D notes is highly customizable. This idea was called GeoMaestro, but the resulting code also includes another environment, called the Composer, in which boxes representing arbitrary musical data can be linked together to form a whole structured composition.
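The idea of deriving rhythm from geometry can be illustrated with a small sketch. This is not GeoMaestro's actual code or algorithm; it is a hypothetical Python model in which notes are static points on a plane and a moving geometric object (called a "pickup" here, a name chosen for this example) plays each note at the moment it reaches it. The classes `SweepingLine` and `ExpandingCircle` and the function `render` are all illustrative assumptions.

```python
import math
from dataclasses import dataclass
from typing import List, Optional, Protocol, Tuple


@dataclass
class Note:
    x: float    # horizontal position on the plane
    y: float    # vertical position on the plane
    pitch: int  # MIDI pitch number (0-127)


class Pickup(Protocol):
    """Hypothetical geometric object that turns note positions into onset times."""

    def onset(self, note: Note) -> Optional[float]: ...


@dataclass
class SweepingLine:
    """A vertical line moving rightwards from start_x at constant speed."""
    start_x: float
    speed: float  # plane units per second

    def onset(self, note: Note) -> Optional[float]:
        # Notes to the left of the starting position are never reached.
        if note.x < self.start_x:
            return None
        return (note.x - self.start_x) / self.speed


@dataclass
class ExpandingCircle:
    """A circle growing from (cx, cy); a note sounds when the radius reaches it."""
    cx: float
    cy: float
    speed: float  # radius growth in plane units per second

    def onset(self, note: Note) -> Optional[float]:
        return math.hypot(note.x - self.cx, note.y - self.cy) / self.speed


def render(notes: List[Note], pickup: Pickup) -> List[Tuple[float, int]]:
    """Return (onset time in seconds, pitch) pairs, sorted by time."""
    events = []
    for note in notes:
        t = pickup.onset(note)
        if t is not None:
            events.append((t, note.pitch))
    return sorted(events)


if __name__ == "__main__":
    notes = [Note(2, 0, 60), Note(4, 1, 64), Note(1, 3, 67), Note(-1, 0, 72)]
    print(render(notes, SweepingLine(start_x=0.0, speed=2.0)))
    # [(0.5, 67), (1.0, 60), (2.0, 64)]
```

In this model the notes never move; only the pickup changes. Replacing the sweeping line with an expanding circle centred at the origin re-times the same four notes into a different rhythm, which is the kind of variation the geometric approach is meant to make easy.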

At this stage I was dealing with a lot of non-MIDI data, such as sound files and Csound scores and orchestras, so KeyKit became too limiting and I decided to continue this development in Squeak. Still, KeyKit is by far the best place for doing anything MIDI, so I kept it tightly integrated with the other environments.

Emacs Lisp code

Developing GeoMaestro required writing a lot of code. I did this in Emacs, using a dedicated mode which notably allows direct control of KeyKit from Emacs, bypassing the KeyKit console.

Working with Csound also involves writing a lot of code. This led to the development of Csound modes for Emacs, initially extending the existing modes written by John ffitch. Here again, they make it possible to drive Csound (and especially its many variants and command-line options) directly from Emacs.

Squeak/GeoMaestro

Emacs lacks several features required for a comprehensive composition environment, notably graphics and threads. Squeak provides them, along with its object-oriented philosophy. With Morphic, Squeak turned out to be the most comfortable place to build incredibly powerful GUIs. Now each piece of musical data can be grabbed and modified with the mouse, along with every element of the geometric representation pioneered by GeoMaestro for KeyKit.

At the time of writing, I am therefore porting GeoMaestro and the Composer to Squeak, revisiting all the related concepts, since the Morphic world offers much more powerful ways to play with them. Squeak still uses KeyKit as its MIDI engine, though.

By writing, in Emacs, a Squeak console very similar to the KeyKit console, I also made it possible to use Squeak as a graphical environment attached to Emacs. This is very early work, but its potential is huge.

Perspectives

Computer music involves manipulating huge quantities of very heterogeneous data. The most difficult point is keeping in touch with the musical side of that data, that is, having representations of it which are "warm" (intuitive, musically meaningful, easy to grab, change and assemble) as opposed to "cold" (lists of numbers, obfuscated code, or GUIs with banks of 128 sliders or 50 knobs).

Real-time music with a computer is a nightmare, since for most people the only physical input devices are a computer keyboard, a mouse, and possibly a MIDI keyboard. In my experience, no real musical feeling can develop from such poor interaction (well, I tried, and made this with real-time operation of Reaktor synths), which is why I concentrate on non-real-time composition, where responsiveness can be implemented through programming.

This part of my research will now take place mostly in Squeak, as GUIs are the most delicate part to develop. Some work remains to be done in Emacs too, so that "writing" music becomes more straightforward.

Another problem is getting all the different programs to communicate smoothly, as it is very frustrating to have the composition process interrupted too often to manipulate files, open new windows, rename things, or start and stop ancillary programs.

In my current system, many programs such as sound players and MIDI synthesizers are already smoothly and transparently integrated into the high-level environments, that is, KeyKit, Emacs and Squeak. The next step is integrating those three with one another, so that a composition does not have to be done in only one of them but can simply be shared among them. In this spirit, the main existing feature is the control of KeyKit and Squeak from Emacs via TCP/IP. This is still too hierarchical, though, and it would be very nice to have a more egalitarian approach, possibly using Erlang nodes to form a network of communicating musical worlds. But that is another story...
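To make the idea of one environment driving another over TCP/IP concrete, here is a minimal sketch of a line-based request/reply exchange. It does not reproduce the actual protocol between Emacs, KeyKit and Squeak, which is not described here; it simply assumes one newline-terminated text command per request and one newline-terminated reply. The class `LineConsole`, the functions `serve_lines` and `start_server_thread`, and the command string `play motif-1` are hypothetical.

```python
import socket
import threading
from typing import Callable


class LineConsole:
    """Client side: send one text command per line, read one reply line back."""

    def __init__(self, sock: socket.socket) -> None:
        self._sock = sock
        self._reader = sock.makefile("rb")  # buffered reader, used for readline()

    def send(self, command: str) -> str:
        # Assumption: commands are single lines, so they must not contain "\n".
        if "\n" in command:
            raise ValueError("command must fit on one line")
        self._sock.sendall(command.encode("utf-8") + b"\n")
        reply = self._reader.readline()
        if not reply:
            raise ConnectionError("server closed the connection")
        return reply.rstrip(b"\n").decode("utf-8")

    def close(self) -> None:
        # Close the reader too, otherwise the socket's file descriptor stays open.
        self._reader.close()
        self._sock.close()


def serve_lines(sock: socket.socket, handler: Callable[[str], str]) -> None:
    """Server side: answer each incoming line with handler(line) until EOF."""
    with sock.makefile("rb") as reader:
        for raw in reader:
            command = raw.rstrip(b"\n").decode("utf-8")
            # Assumption: the handler's reply contains no newlines.
            sock.sendall((handler(command) + "\n").encode("utf-8"))


def start_server_thread(sock: socket.socket,
                        handler: Callable[[str], str]) -> threading.Thread:
    """Run serve_lines in a background daemon thread."""
    thread = threading.Thread(target=serve_lines, args=(sock, handler), daemon=True)
    thread.start()
    return thread


if __name__ == "__main__":
    # A connected socket pair stands in for a real TCP connection; a real client
    # would use socket.create_connection((host, port)) instead.
    client_sock, server_sock = socket.socketpair()
    start_server_thread(server_sock, lambda cmd: "ok " + cmd)
    console = LineConsole(client_sock)
    print(console.send("play motif-1"))  # ok play motif-1
    console.close()
```

In this arrangement one side always asks and the other always answers, which is exactly the "pyramidal" shape described above; a peer-to-peer network would need every node to act as both client and server.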

Music

Designing composition tools is great fun, but music is the ultimate aim!

At this point I do not have much to say, except that everything I have made is freely available from this page:

http://www.zogotounga.net/GM/TGG/TGG.htm

That's all, folks!
